Why Financial Advisors Choose AI Tools That Don't Record

Why advisors are switching to non-recording AI tools: consent laws, compliance risks, and what to look for.

AI tools for financial advisors can save hours every week. But not all of them work the same way. The difference between a tool that records audio and one that doesn't has direct consequences for compliance with consent law, data privacy, regulatory recordkeeping, and client trust.

Recording-based tools capture and store live audio, then transcribe and summarize it. Non-recording tools may still listen to conversations and transcribe them in real time, but they never save or retain audio files.

Both approaches save advisors real time. The question is which one fits your practice, your clients, and your compliance policy.

This article breaks down why a growing number of advisors deliberately prefer non-recording AI tools. You'll learn about the data security concerns, litigation risks, the client trust factor, and what non-recording AI tools look like in practice.

Key Takeaways

- Twelve U.S. states generally require all-party consent to record conversations, and Michigan's status is unsettled. Because non-recording tools never create a recording, they remove the recording-consent obligation for advisors serving clients across state lines.

- Recording a meeting can create a record subject to retention rules under SEC Rule 204-2 (commonly known as the “Books and Records” rule). It also adds third-party vendor data security risk and can discourage clients from sharing sensitive financial data.

- Advisors don't have to choose between productivity and privacy. No-record AI tools deliver summaries, CRM updates, and follow-up emails without creating a permanent audio record.

The Consent Law Problem with Recording Client Meetings

Recording a client conversation, whether by a person or an AI tool, triggers federal and state wiretapping and privacy laws.

Under U.S. federal law (the Electronic Communications Privacy Act / Wiretap Act), one-party consent is the baseline.

But 12 states have a stricter standard. They require all-party consent, meaning every participant must be informed and agree before recording begins. Those states are: California, Connecticut, Delaware, Florida, Illinois, Maryland, Massachusetts, Montana, Nevada, New Hampshire, Pennsylvania, and Washington. Michigan's status is unsettled.

When participants sit in different states, the safest legal approach is to apply the stricter standard.
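For firms that want to build this rule into a pre-meeting checklist, here is a minimal Python sketch of the "apply the stricter standard" logic. The function name `consent_standard` and its parameters are hypothetical, the state list simply mirrors the one above, and treating Michigan as strict by default is a conservative assumption. It is an illustration, not legal advice, so confirm the current list with counsel.

```python
# States this article lists as generally requiring all-party consent.
ALL_PARTY_STATES = frozenset(
    {"CA", "CT", "DE", "FL", "IL", "MD", "MA", "MT", "NV", "NH", "PA", "WA"}
)
# Michigan's status is described as unsettled; handling it as strict
# by default is a conservative assumption, not a statement of the law.
UNSETTLED_STATES = frozenset({"MI"})


def consent_standard(participant_states, treat_unsettled_as_strict=True):
    """Return "all-party" if any participant is in a stricter state,
    otherwise "one-party" (the federal baseline)."""
    strict = set(ALL_PARTY_STATES)
    if treat_unsettled_as_strict:
        strict |= UNSETTLED_STATES
    # Normalize input such as " ca " to "CA" before comparing.
    states = {s.strip().upper() for s in participant_states}
    return "all-party" if states & strict else "one-party"
```

For example, `consent_standard(["TX", "CA"])` applies the all-party standard because one participant is in California, even though Texas on its own would not require it.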

AI tools that create voiceprints to identify speakers may separately trigger the Illinois Biometric Information Privacy Act, which requires written consent before collecting biometric identifiers. This is a separate obligation from general recording consent.

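Under SEC Rule 204-2, registered investment advisers must make and keep specified books and records, including certain written client communications, generally for at least five years. FINRA Rule 3170 (the "Taping Rule") separately requires certain firms to record and retain telephone conversations between their registered persons and customers.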

None of this means recording-based tools are inherently non-compliant. Many advisors use them with proper disclosure and consent workflows. But for advisors serving clients across multiple states, managing different consent requirements for every call adds real friction. A non-recording workflow removes that burden completely.

The Litigation Risk Is Real and Growing

Active class-action litigation has been filed against AI transcription companies and financial institutions that used third-party AI providers without adequate consent processes.

One notable example is Brewer v. Otter.ai (filed August 2025, California), where a non-user of Otter.ai alleged that the tool joined virtual meetings and transmitted audio to the company's servers without his knowledge or consent. The case brings claims under the Electronic Communications Privacy Act, the Computer Fraud and Abuse Act, and the California Invasion of Privacy Act (CIPA).

Two earlier cases followed a similar pattern. In Turner v. Nuance, AI recorded bank customer calls for voice authentication, while in Gladstone v. Amazon Web Services, AI analyzed voice recordings for sentiment and response time.

In both cases, courts refused to dismiss. The Gladstone court specifically noted that the AI entity collected data to improve its own products, not just to serve the bank.

These cases raise a common legal question: Does a third-party AI provider count as an unauthorized interceptor when it uses call data for its own purposes, like model training? This area of law remains actively litigated and unsettled.

Data Security: What Happens to Recorded Audio After the Meeting

When an AI tool records a client conversation, audio files containing sensitive financial data, personal details, and sometimes health-related information get transmitted to a third-party AI provider's servers.

Under Regulation S-P, an RIA (Registered Investment Adviser) must safeguard the privacy of client information. Vendor agreements should include confidentiality provisions that bar the vendor from using client data for model training or unrelated processing. Without those safeguards, information exposed to an AI tool could be used to train the vendor's model and could become discoverable by other users.

There's also liability risk. As the Turner and Gladstone cases show, financial institutions may not be immune from state privacy laws when using third-party AI tools. California law imposes liability even on parties who "aid, agree with, employ, or conspire with" non-parties to tap communications.

The Client Trust Dimension

Beyond legal compliance, some advisors choose non-recording tools because of how being recorded affects the advisor-client relationship.

Conversations about financial planning often involve topics clients don't share lightly: health conditions, family conflicts, divorce, personal financial struggles, and estate disagreements.

Clients who know they are being recorded may hold back sensitive financial information, which makes it harder to give tailored advice. Think of high-net-worth individuals managing large portfolios, estate planning clients working through generational wealth transfers, or retirees making distribution decisions.

Northwestern Mutual's 2025 Planning & Progress Study found that most Americans continue to trust financial advisors with money management over AI alone. But comfort with AI varies by generation. 54% of Gen Z and Millennials prefer working with advisors who use AI, compared to just 36% of Boomers.

The Advisor360° 2025 Connected Wealth Report found that 85% of advisors call generative AI a help to their practice. It also found that 82% of advisors' firms now have formal policies on Gen AI use, a sign that firms understand that how AI is used matters as much as whether it is used.

A non-recording tool removes the discomfort clients feel when told a meeting is being recorded and allows advisors to capture insights without compromising confidentiality. This builds greater client trust through transparent privacy-first workflows.

Why Human Review of AI Outputs Matters

The core of advisory work, including empathy and behavioral coaching, cannot be captured by AI. AI can also miss emotional nuances, misinterpret financial jargon, or produce summaries with subtle errors. That's why advisors should verify AI-generated outputs for accuracy before they reach a client or a system of record.

Financial advisors must maintain final accountability and apply critical judgment to every output generated by AI tools. A final review before anything goes into a CRM platform or out to a client is a small investment that protects both accuracy and the advisor-client relationship.
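For firms that script their own follow-up workflow, the review step can be enforced rather than left to habit. The Python sketch below is a hypothetical illustration: `DraftNote`, `approve`, and `save_to_crm` are made-up names, and a plain list stands in for a real CRM. It does not reflect any vendor's actual API.

```python
from dataclasses import dataclass, replace
from typing import Optional


@dataclass(frozen=True)
class DraftNote:
    client_id: str
    summary: str
    reviewed_by: Optional[str] = None  # set only after an advisor signs off


def approve(note: DraftNote, advisor: str) -> DraftNote:
    """Return a copy of the note marked as reviewed by the named advisor."""
    return replace(note, reviewed_by=advisor)


def save_to_crm(note: DraftNote, crm: list) -> None:
    """Refuse to store any AI-generated note that has not been reviewed."""
    if note.reviewed_by is None:
        raise PermissionError("AI-generated note must be reviewed before saving")
    crm.append(note)  # stand-in for a real CRM write
```

The design choice is that an unreviewed note cannot reach the record at all, so the advisor's sign-off becomes a precondition instead of a reminder.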

What Non-Recording AI Tools Actually Look Like in Practice

Many advisors assume that skipping the recording means losing capability. That's not the case.

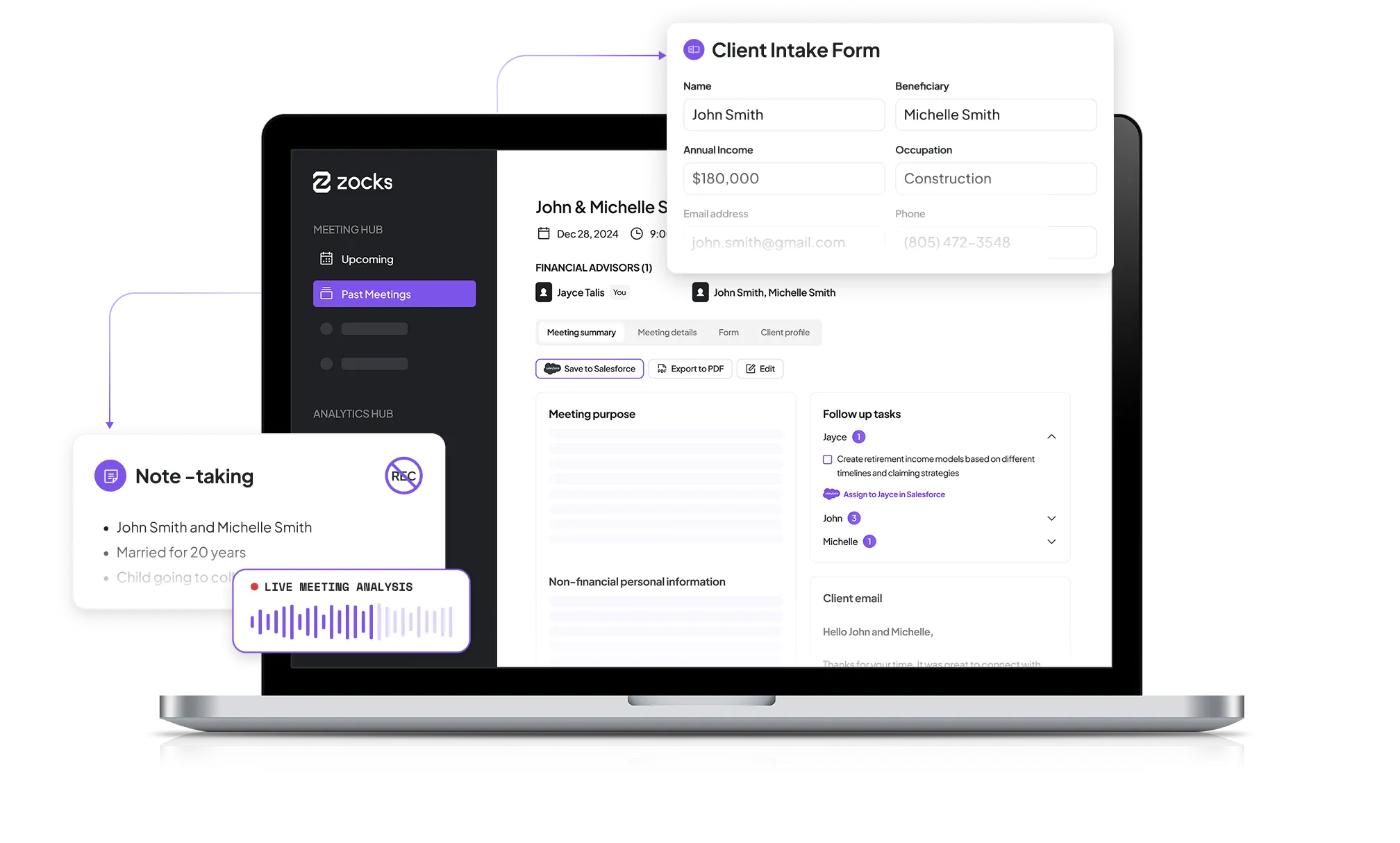

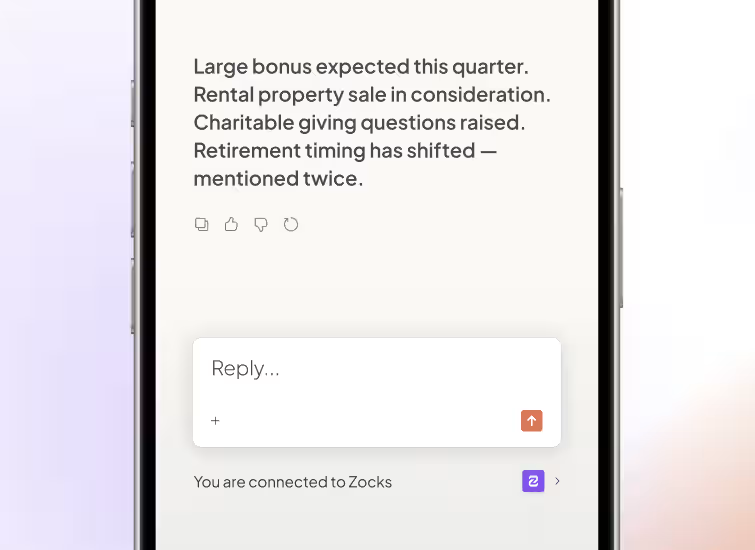

A non-recording tool like Zocks joins your client call, listens in real time, and transcribes the conversation. Because no recording takes place, the client's consent to record isn't needed.

By the time you hang up, Zocks has generated a structured AI-generated summary, a list of action items, and a draft follow-up email. It can automatically update hundreds of individual fields in your CRM platform (Wealthbox, Redtail, Salesforce), not just a generic note. It also pushes data into your financial planning and portfolio management tools. Zocks also remembers important information discussed in the meeting so that, when it’s time to prepare for the next meeting with the client, it can generate a one-page client summary with complete context and references.

The notes and summaries are functionally similar to those produced by recording-based tools. The difference: no audio is ever recorded.

Recording vs. Non-Recording: How to Choose the Right Approach for Your Practice

This isn't a one-size-fits-all decision. The right choice depends on your client base, your firm's compliance infrastructure, and the level of friction you're willing to tolerate.

A recording-based tool may work well if your clients are primarily in one-party consent states and your firm already has a compliance management system that handles consent disclosures at scale. It also makes sense if your compliance policy covers audio file retention, storage, and supervision, or if you need verbatim transcripts for use cases like advisor training or quality review.

A non-recording tool is likely a better fit if you serve clients across multiple states, including all-party consent jurisdictions. It's also worth considering if you work with clients who place a premium on confidentiality. A non-recording approach eliminates the legal need for client consent to record conversations, simplifies data retention policy obligations, and makes it easier to adopt AI across your firm.

Some firms are finding a practical middle ground. They use recording-based tools for internal team meetings and non-recording tools for client conversations. This is a defensible approach that balances productivity with privacy.

Best Practices for Using AI Meeting Tools Effectively

Here's how to get the most from your tool while staying compliant and aligned with fiduciary standards.

- Establish a "Human-in-the-Loop" Workflow: Build a manual review step into every meeting follow-up. This catches any hallucinations before notes get finalized. If AI-generated notes contain errors and are saved to a CRM or another system, the advisor is responsible for the inaccurate, official record.

- Standardize Your Input: For non-recording tools, consistent dictation habits and shorthand improve the quality of both CRM updates and summaries.

- Audit Your CRM Field Mapping: Verify that AI outputs correctly trigger tasks or workflows in platforms like Redtail or Wealthbox, rather than appearing as static notes. Accurate field-level mapping is what makes CRM integration worthwhile.

- Maintain Transparency with Clients: Whether or not recording consent is legally required, tell clients you're using AI. This builds greater client trust through transparent privacy-first workflows.

Potential Risks to Avoid

- Over-Reliance on AI Memory: AI can't capture emotional nuance or complex sentiment. Relying on AI to capture key takeaways can cause junior advisors to lose the ability to identify critical information themselves.

- Ignoring Updates to Vendor Privacy Policies: Review your AI vendor's terms regularly. Data retention and model training practices can shift without notice.

- "AI Washing" Your Disclosures: Don't overstate what your AI tools do in compliance filings or marketing. Overstating AI capabilities, referred to as "AI washing," is under scrutiny by regulators, including the SEC.

Questions to Ask Before Deploying Any AI Meeting Tool

Use this checklist when evaluating any AI tool for your practice:

- Does this tool record audio, video, or both? If yes, where is that data stored and for how long? Who controls it?

- Is client data used for model training? Can I opt out?

- Does the vendor have a current SOC 2 report (Type I or Type II)? What does their cybersecurity framework look like?

- How does this tool handle meetings where participants are in different consent jurisdictions?

- What does the data retention policy look like? Can I control how long data is retained?

- Does the tool integrate with my existing CRM platform and financial planning software at the field level?

- How will I explain this tool to my most private, most trust-conscious client?

That last question matters most. Your AI tool selection is ultimately a client relationship decision.

Frequently Asked Questions

Do AI tools that don't record still save advisors time?

Absolutely. Non-recording AI tools like Zocks automate note structuring, CRM and planning updates, and AI-generated summaries. These AI tools can save financial advisors significant time and resources by automating mundane tasks such as data entry, form fills, document processing, and email management.

Are recording-based AI tools non-compliant for financial advisors?

No. Recording-based tools like Fathom, Otter.ai, or Gong are not inherently non-compliant. The compliance risks posed by AI tools can differ significantly based on the function and design of the tools used by financial advisors. What matters is proper disclosure, consent requirements in applicable states, supervision, and alignment with your firm's compliance policy.

Which U.S. states require all-party consent for recording?

As of 2025, 12 states generally require all-party consent: California, Connecticut, Delaware, Florida, Illinois, Maryland, Massachusetts, Montana, Nevada, New Hampshire, Pennsylvania, and Washington. Michigan's law is unsettled.

State laws change, so always verify current requirements with your legal counsel or compliance team.

What happens if a client is in an all-party consent state but the advisor is not?

The safest approach is to apply the stricter standard. If your client is in a state requiring all-party consent, you should obtain full consent before recording, regardless of where you're located.

What should advisors tell clients about AI use in meetings?

Be clear and direct. Tell clients whether conversations are recorded or transcribed, how data is handled, and how the tool supports (but does not replace) your professional judgment. When a conversation is recorded, wiretapping laws in all-party consent states require disclosure and consent. Even where the law does not require it, transparency builds client trust.
